Category: Six Sigma

New Explainer Video

I have started using Doodly for explainer videos. I have my first creation done, now I need your help. What do I need to adjust to improve it?

All feedback will be appreciated!

Don’t just move the average, understand the spread

Picture the scene: someone in your organisation comes up with a cost-saving idea. If we move the process mean to the lower limit, we can save thousands of pounds and still be in specification. The technical team doesn’t like it, but they can’t come up with a reason other than “it’ll cause problems”. The finance director loves the idea, and the production manager, with one eye on costs, says, “Well, if we can save money and be in spec, what’s the problem?”

Let me help you.

In this scenario, the technical team may be right. If we assume that your process is in control and produces items with a normal distribution (remember, that is the best-case scenario!), logic dictates that half of your data is below the average value and half is above. That being the case, what you really want to know is how far from the average the distribution spreads. If you change the process to the extreme where the average value sits right on the customer specification limit, half of everything you make will be out of spec, and the larger the spread, the further out of spec those items will be. Can you afford a 50% failure rate? What will the impact be on your customers, your reputation and your workload (dealing with complaints)?

To work out how much we can move the process, we must first understand how much it varies, and we use a statistical value called the standard deviation to help us. Standard deviation is a measure of the typical variation from the mean for a sample data set. To work it out, take 20 samples, measure them all 5 times, then use a spreadsheet to work out the mean and standard deviation. If that is too much, take 10 samples and measure each 3 times. Keep in mind that the smaller sample size will give a less reliable estimate of the standard deviation. Now take the mean and add 3 × the standard deviation. This is the upper limit of your process spread. Subtract 3 × the standard deviation from the process mean to find the lower limit of your process spread. The difference between these two numbers is the spread of your process and will contain 99.7% of the results measured from the process output IF the process is in control and nothing changes.
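If you would rather script the calculation than use a spreadsheet, a minimal sketch might look like the one below. The measurement values are invented purely for illustration, and `process_spread` is a hypothetical helper name, not part of any standard tool.

```python
from statistics import mean, stdev


def process_spread(measurements):
    """Return (mean, standard deviation, lower limit, upper limit).

    The limits are the mean minus and plus 3 standard deviations.
    """
    m = mean(measurements)
    s = stdev(measurements)  # sample standard deviation (n - 1 denominator)
    return m, s, m - 3 * s, m + 3 * s


# Hypothetical measurements, invented for illustration only
data = [10.1, 9.9, 10.0, 10.2, 9.8, 10.0, 10.1, 9.9, 10.0, 10.0]

m, s, lower, upper = process_spread(data)
print(f"mean = {m:.3f}, standard deviation = {s:.3f}")
print(f"process spread = {lower:.3f} to {upper:.3f}")
```

Compare the two printed limits with your specification limits before anyone moves the process mean.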

If moving the mean takes the 3 standard deviation limits of your process outside of the specification, you will get complaints. It could be that the limits are already outside of the specification, in which case moving the average will make a bad situation worse.

It is possible to calculate the proportion of failures likely to result from a change of average; this is done using a z-score calculation. I’m not aiming to teach maths, so the important message is that the failure rate can be calculated.
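For anyone who does want to see the calculation, here is a rough sketch. It assumes the process is normally distributed and in control, and it only looks at a lower specification limit; the function name and the numbers are hypothetical.

```python
from statistics import NormalDist


def fraction_below_limit(process_mean, std_dev, lower_spec):
    """Estimate the fraction of output falling below the lower spec limit.

    Assumes a normal, in-control process.
    """
    z = (lower_spec - process_mean) / std_dev  # z-score of the limit
    return NormalDist().cdf(z)  # area of the standard normal curve below z


# Mean sitting right on the limit: half of the output fails
print(fraction_below_limit(10.0, 0.1, 10.0))

# Mean three standard deviations above the limit: roughly 0.13% fails
print(fraction_below_limit(10.3, 0.1, 10.0))
```

The first call reproduces the 50% failure rate described earlier; the second shows how quickly the failure rate drops once the mean is kept away from the limit.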

This is the tip of the iceberg with understanding your process. If you don’t know that your process is stable and in control, the spread won’t help you because the process can jump erratically. To improve your process:

1. Gain control; make sure the process is stable.

2. Eliminate errors and waste.

3. Reduce variation.

4. Monitor the process to make sure it stays that way.

The most significant and profitable gains are often from process stability, not from cost-cutting. All cost-cutting does is reduce the pain. Think of cost-cutting as a painkiller when you have an infection: it makes it hurt less, but doesn’t stop the infection. You need to stop the infection to feel better.

Now do you want to hurt less or do you want to get better?

Where is the evidence for sigma shift?

This is a longer post than normal, since the topic is one that is debated and discussed wherever six sigma interacts with Lean and industrial engineering.

In lean six sigma and six sigma methodology there is a controversial little mechanism called sigma shift. Ask anyone who has been trained and they will tell you that all sigma ratings are given as short-term sigma ratings and that if you are using long-term data you must add 1.5 to the sigma rating to get a true reflection of the process effectiveness. Ask where this 1.5 sigma shift comes from and you will be told, with varying degrees of certainty, that it has been evidenced by Motorola and industry in general. So should we just accept this?

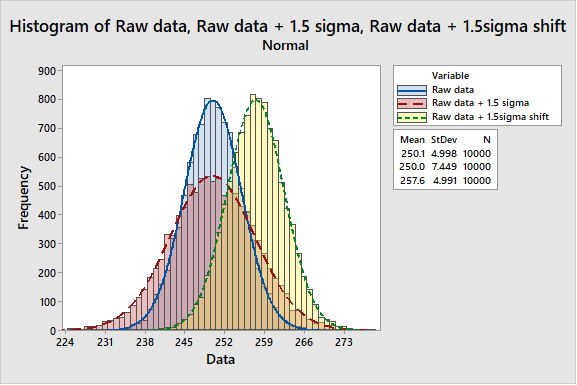

The argument is presented as a shift in the mean by up to 1.5 sigma as shown below.

Isn’t it strange that, in a discipline that is so exacting for evidence in so many aspects, this idea that the process sigma value must increase by 1.5 if you are using long-term data is accepted without empirical evidence? The argument that Motorola or some other corporation has observed it, so it must be true, sounds a lot like ‘We’ve always done it that way’. Suddenly this assertion doesn’t feel so comfortable, does it? I set out to track down the source of this 1.5 sigma shift and find the source of the data, some study with actual data to prove the theory.

As soon as one starts to ask for data and search for studies, it becomes apparent that the data is not readily available to support this statement. Every paper referring to the 1.5 sigma shift seems to refer to it as ‘previous work’. Several studies came up consistently during my search.

- An article on tolerancing from 1962 by A. Bender (Bender, 1962)

- An article on statistical tolerancing by David Evans (Evans, 1975)

- An article on Six Sigma in Quality Progress from 1993 (McFadden, 1993)

- A treatise on the source of 1.5 sigma shift by Davis R. Bothe (Bothe, 2002)

So why am I focusing on these 4 citations? I perceive a migration across these papers from a simplified method of calculating cumulative tolerances to a theoretical explanation of where the 1.5 sigma shift comes from.

The first article in this series was written in 1962. At this time all calculations were done by hand, with complex calculations done with the aid of a slide rule. Mistakes were easy to make, and the process was time-consuming. This was before electronic calculators, and before computers. Bender was seeking a shortcut to reduce the time taken to calculate tolerance stacks, whilst retaining some scientific basis for their calculation. The proposed solution was to use a fudge factor to arrive at a perceived practical tolerance limit. The fudge was to multiply the square root of the variance (the standard deviation) by 1.5, a figure based on “probability, approximation, and experience”. There is nothing wrong with this approach; however, it cannot be called a data-driven basis. It should also be understood that the purpose of the 1.5 factor in this case was to provide a window for tolerancing that would give an acceptable engineering tolerance for manufactured parts.

The paper by Evans then provides a critical review of the methods available and uses the Bender example as a low-technology method for setting tolerances that appears to work in that situation. One interesting comment in Evans’s paper is in his closing remarks:

“Basic to tolerancing, as we have looked at it here as a science, is the need to have a well-defined, sufficiently accurate relationship between the values of the components and the response of the mechanism.”

Is there evidence that the relationship between the values of the components and the response of the mechanism is sufficiently well defined to use it as a basis for generalisation of tolerancing? I would argue that in most processes this is not the case. Commercial and manufacturing functions are eager to get an acceptable product to market, which is in most cases the correct response to market need. What most businesses fail to do thereafter is invest time, money and effort into understanding these causal relationships, until there is a problem. Once there is a problem, there is an expectation of instant understanding. In his concluding remarks Evans also notes that

“As for the other area discussed, the shifting and drifting of component distributions, there does not exist a good enough theory to provide practical answers in a sufficiently general manner.”

It seems then, that as of 1975 there was inadequate evidence to support the notion of 1.5 sigma shift.

The next paper identified is an article by McFadden published in Quality Progress in 1993. In this article, McFadden makes a strong mathematical case that, when tolerancing, aiming for a Cp of 2 and Cpk of 1.5 yields a robust process. This is based upon a predicted shift in the process mean of 1.5σ. Under these circumstances, a defect rate of 3.4 defects per million opportunities would be achieved. Again, this is a sound mathematical analysis of a theoretical change; however, there remains no evidence that it is real. Reference is made here to the paper by Bender.
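The arithmetic behind the 3.4 figure can be checked with a short sketch. It assumes a normally distributed process, specification limits at 6 standard deviations either side of the target, and a mean shifted by 1.5 standard deviations towards one limit. The function names are hypothetical and are not taken from McFadden’s article.

```python
from math import erfc, sqrt


def upper_tail(z):
    """Area of the standard normal curve above z."""
    return 0.5 * erfc(z / sqrt(2))


def dpmo(sigma_level, mean_shift=0.0):
    """Defects per million opportunities for spec limits at +/- sigma_level,
    with the process mean shifted by mean_shift standard deviations."""
    near = upper_tail(sigma_level - mean_shift)  # tail beyond the nearer limit
    far = upper_tail(sigma_level + mean_shift)   # tail beyond the farther limit
    return (near + far) * 1_000_000


centred = dpmo(6.0)       # no shift: about 0.002 DPMO
shifted = dpmo(6.0, 1.5)  # 1.5 sigma shift: about 3.4 DPMO
print(f"centred: {centred:.4f} DPMO, shifted: {shifted:.2f} DPMO")
```

With limits at 6σ, Cp = 6/3 = 2, and a 1.5σ shift leaves 4.5σ to the nearer limit, so Cpk = 4.5/3 = 1.5. The sketch confirms that the 3.4 figure follows from the assumed shift; it says nothing about whether the shift itself is real.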

The paper by Bothe is very similar to the one by McFadden. Both papers express a view that there is evidence for this process shift somewhere, usually with Motorola sources quoted. The articles by Evans, McFadden, and Bothe are all referring to the case where the process mean shifts by up to 1.5σ with no change in the standard deviation itself. Evans notes that there is no evidence this is a true case.

If you keep searching, eventually you find an explanation of the source of the 1.5 sigma shift from the author of Six Sigma itself, Mikel J. Harry. Harry addressed the issue of the 1.5σ shift in his book Resolving the Mysteries of Six Sigma (Harry, 2003). On page 28 there is the most compelling evidence I have found for the origin of the 1.5σ shift. Harry states in his footnote:

“Many practitioners that are fairly new to six sigma work are often erroneously informed that the proverbial “1.5σ shift factor” is a comprehensive empirical correction that should somehow be overlaid on active processes for purposes of “real time” capability reporting. In other words, some unjustifiably believe that the measurement of long-term performance is fully unwarranted (as it could be algebraically established). Although the “typical” shift factor will frequently tend toward 1.5σ (over the many heterogeneous CTQ’s within a relatively complex product or service), each CTQ will retain its own unique magnitude of dynamic variance expansion (expressed in the form of an equivalent mean offset).”

This statement confirms that there is no comprehensive empirical evidence for the 1.5σ shift. Furthermore, Harry clearly states that the long-term behaviour of a process can only be established through long-term study of that process. A perfectly reasonable assertion. There is another change here, in that Harry explains the 1.5σ shift in terms of an increase in the standard deviation due to long-term sampling variations, not, as is often postulated in other texts, movements in the sample mean. Harry’s explanation is consistent with one of the central precepts of six sigma, namely that the sampling regime is representative. If the regime is representative, it is clear that the sample mean can vary only within the confidence interval associated with the sample. Any deviation beyond this would constitute a special cause, since the process mean will have shifted, yielding a different process. The impact of different samples will be to yield an inflated standard deviation, not a shift of mean. This means that the 1.5 sigma shift should be represented as below, not as a shift of the mean.

In his book Harry expands on the six sigma methodology as a mechanism for setting tolerances and examining the capability of a process to meet those tolerances with a high degree of reproducibility in the long term. Much of the discussion in this section relates to setting of tolerances using a safety margin M=0.50 for setting of design tolerances.

It seems the 1.5σ shift is a best-guess estimation of the long-term tolerances required to ensure compliance with specification. It is not, and never has been, a profound evidence-based relationship between long-term and short-term data sets. The source of this statement is none other than Mikel J. Harry, stated in his book and reproduced above. Harry has stated that

“…those of us at Motorola involved in the initial formulation of six sigma (1984 – 1985) decided to adopt and support the idea of a ‘1.5σ equivalent mean shift’ as a simplistic (but effective) way to account for the underlying influence of long-term, random sampling error.”

For me it is a significant coincidence that Bender proposed an estimating formula for tolerancing of processes based on 1.5 * √variance of x. Variance is a statistical term. It is defined as follows
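For readers who want the formula, a common textbook form is sketched here. It assumes the sample version (dividing by n − 1) for n measurements x_1 to x_n with mean x̄; the symbols are illustrative labels, not taken from Bender’s paper.

```latex
s^2 = \frac{1}{n-1} \sum_{i=1}^{n} \left( x_i - \bar{x} \right)^2
```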

The square root of variance is the standard deviation. Or put another way, we can estimate the likely behaviour over time of a process parameter using 1.5 sigma as the basis of variation to allow for shifts and drifts in the sampling of the process.

Given the dynamic nature of processes and process set-up, the methodology employed in process setting can greatly influence the observed result. For example, if the process set-up instruction requires the process to be inside specification before committing the run, then there may be genuine differences in the process mean. This will be far less likely if the process set-up instruction requires the process to be on target with minimum variance.

It seems to me that the 1.5 sigma shift is a ‘Benderized tolerance’ based on ‘probability, approximation, and experience’. If tolerances are set on this basis, it is vital that the practitioner has knowledge and experience appropriate to justify and validate their assertion.

Harry refers to Bender’s research, citing this paper as a scientific basis for non-random shifts and drifts. The basis of Bender’s adjustment must be remembered – ‘probability, approximation and experience’. Two of these can be quantified and measured, what is unclear is how much of the adjustment is based on the nebulous parameter of experience.

In conclusion, it is clear that the 1.5 sigma shift quoted in almost every six sigma and lean six sigma course as a reliable estimate of long term shift and drift of a process is at best a reasonable guess based on a process safety margin of 0.50. Harry has stated in footnote 1 of his book

“While serving at Motorola, this author was kindly asked by Mr Robert ‘Bob’ Galvin not to publish the underlying theoretical constructs associated with the shift factor, as such ‘mystery’ helped to keep the idea of six sigma alive. He explained that such a mystery would ‘keep people talking about six-sigma in the many hallways of our company’.”

Given this information, I will continue to recommend that if a process improvement practitioner wishes to make design tolerance predictions then a 1.5 sigma shift is as good an estimate as any and at least has some basis in the process. However, if you want to know what the long-term process capability will be and how it compares to the short-term process capability, continue to collect data and analyse when you have both long and short term data. Otherwise, focus on process control, investigating and eliminating sources of special cause variation.

None of us can change where or how we are trained, nor can we be blamed for reasonably believing that which is presented as fact. The deliberate withholding of critical information to create mystery and debate demonstrates a key difference in the roots of six sigma compared to lean. Such disinformation does not respect the individual and promotes a clear delineation between the statisticians and scientists trained to understand the statistical basis of the data, and those chosen to implement the methodology. This deliberate act of those with knowledge withholding information has created a fundamental misunderstanding of the methodology. Is it then any wonder that those who have worked diligently to learn, having been misinformed by the originators of the technique, now propagate and defend this misinformation?

What does this mean for the much vaunted 3.4 DPMO for six sigma processes?

The argument for this level of defects is mathematically correct, however the validity of the value is brought into question when the objective evidence supporting the calculation is based in supposition not process data. I think it is an interesting mathematical calculation, but if you want to know how well your process meets the specification limits, the process capability indices Cp and Cpk are more useful. After all, we can make up any set of numbers and claim compliance if we are not concerned with data, facts and evidence.
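As a rough sketch of how the two indices are usually calculated, assuming a normal, in-control process with a measured mean and standard deviation (the function name and the numbers are hypothetical):

```python
def capability_indices(process_mean, std_dev, lower_spec, upper_spec):
    """Return (Cp, Cpk) for a process measured against two spec limits."""
    # Cp compares the specification width with the 6 sigma process spread
    cp = (upper_spec - lower_spec) / (6 * std_dev)
    # Cpk uses the distance from the mean to the nearer limit
    nearest = min(upper_spec - process_mean, process_mean - lower_spec)
    cpk = nearest / (3 * std_dev)
    return cp, cpk


# Centred process: Cp and Cpk are equal
print(capability_indices(10.0, 0.1, 9.4, 10.6))

# Same spread, mean moved 1.5 standard deviations towards the upper limit:
# Cp is unchanged but Cpk falls
print(capability_indices(10.15, 0.1, 9.4, 10.6))
```

Cp tells you whether the process could fit inside the specification; Cpk tells you whether it actually does, given where the mean currently sits. Both depend on measured data, which is the point.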

This seems to be a triumph of management style over sense and reason, creating a waste of time and effort through debating something that has simply been taught incorrectly, initially through a conscious decision to withhold essential information, later through a failure to insist on data, evidence and proof.

However, if we continue to accept doctrine without evidence, can we really regard ourselves as data-driven scientists? Isn’t that the remit of blind faith? It is up to six sigma teachers and practitioners to now ensure this misinformation is corrected in all future teachings and to ensure that the 1.5 sigma shift is given its proper place as an approximation to ensure robust tolerances, not a proven process-independent variation supported by robust process data.

Bibliography

Bender, A. (1962). Benderizing Tolerances – A Simple Practical Probability Method of Handling Tolerances for Limit-Stack-Ups. Graphic Science, 17.

Bothe, D. R. (2002). Statistical Reason for the 1.5σ Shift. Quality Engineering, 14(3), 479-487. Retrieved February 22, 2018, from http://tandfonline.com/doi/full/10.1081/qen-120001884

Evans, D. H. (1975). Statistical Tolerancing: The State of the Art, Part III. Shifts and Drifts. Journal of Quality Technology, 72-76.

McFadden, F. (1993). Six Sigma Quality Programs. Quality Progress, 26(6).

Lean? Six sigma? TPS? How about just improving the customer experience.

There are so many models out there for process improvement that I fear the reason for having the models gets lost. You can’t see the wood for the trees. How do we get back to seeing what is in front of us?

What lies at the heart of all of the improvement methodologies?

I would argue that the reason for doing any of this is the same; a basic desire to improve the business. The next question is to improve what? Why improve at all? All businesses are there to service the needs of their customers, whether that need is for doughnuts, cars, phones, accountancy services, medical aid, it doesn’t matter. If we can find a way to service the customer’s needs better, then perhaps we can find a way to make everyone’s job more secure and grow the business. If we can make more money along the way even better.

So why do we obsess about all these different methods?

Everyone tries to understand how someone else, who we perceive is better at something, does it. We all study someone, we are taught subjects in school, how to answer questions, how to solve problems, how to make things better. Unfortunately, our education systems teach us to copy, imitate rather than innovate, so we look for models and systems to copy since that is our conditioning.

Therein lie the seeds of ruin for many improvement projects. We go, we study, we focus on what, but we fail to understand why. The real question for improvement is, why do it? Many companies who engage in process improvement, lean or six sigma projects do so to reduce costs. That cost reduction is often accompanied by job losses, which works in the short term but destroys trust between workers and management, and makes future improvement projects almost impossible. This is because there is an internal, short-term focus for the business. Management changes every 3 to 5 years; every new set of managers focuses on the perceived deficiencies of their predecessors, then proceeds to change the direction to make things better. W. Edwards Deming identified these factors in his seven deadly diseases: short-term goals, lack of constancy of purpose, job hopping by management, performance reviews, focus on visible figures, excessive medical costs, and excessive legal costs.

American Management thinks that they can just copy from Japan. But they don’t know what to copy

W Edwards Deming

How do we succeed?

As Simon Sinek observes, we must start with why. Why do we want to improve? Is it to better serve our customers or to make more money? As United Airlines have recently shown, just saying that your customers are a priority isn’t enough. If customer service was at the heart of their principles, that video of security guards dragging a paying passenger from a flight would not have been possible. To truly succeed, a business must focus on improving the aspects of their product or service their customers value most. It must not be done to simply improve margin, but to reduce the level of defects, improve customer satisfaction, eliminate the opportunity for defects, reduce lead time, reduce waste.

This focus will lead the business to look at the process for creating customer value; reducing defects, eliminating the opportunity for defects and reducing waste all deliver lower costs for the business and better service for the customer. Reducing waste and defects means that less time is wasted on defect correction and replacement; this gives a shorter lead time and lower costs. Determining a long-term strategy, and setting in place a review process to ensure the strategy is sustained, will ensure constancy of purpose. Focusing on these factors and ensuring that all the changes are sustainable for the long term may be harder, but is ultimately much better for the business.

In summary, focus on your customer needs, plan for long term success, and make changes that are consistent with your long-term goals. Don’t copy what others have done, understand the link between their long-term goals and improvement activities. Once this is understood for their business, focus on your own business and use only the tools and techniques that support your own long-term goals AND your customer’s needs. If you ever find a conflict between these demands, always choose your customer’s needs; long-term success can only come from repeat business, and repeat business only comes from satisfied customers.

Profit in business comes from repeat customers, customers that boast about your product or service, and that bring friends with them.

W. Edwards Deming

Lean and Six Sigma; Is it a choice or collaboration?

I regularly see posts and discussion points asking what are the differences between Lean and Six Sigma, should Lean or Six Sigma be used first, and if Lean is better than Six Sigma. I find it really puzzling that people involved in continuous improvement still have this debate, especially since the two techniques are not alternative approaches, but are complementary techniques.

Let’s take a quick look at these three questions.

- What are the differences between Lean and Six Sigma?

Lean is the practical application of the Toyota Production System. Lean started out as a simple way to ensure that businesses were focused on the things that customers value and that the activities in the business are as efficient as possible at delivering customer value. Lean focuses on process velocity, reducing waste in all forms and eliminating non-value-added activities. There is a bias to immediate action in Lean, to ensure that waste is removed in the shortest possible time.

Six Sigma was developed by Motorola to enable effective competition against high quality imports from Japan. Six Sigma is a highly structured process aimed at understanding and reducing variation to ensure that the process always delivers the product or service required by the customer. Six Sigma requires statistical evidence and proof of performance, with a mantra of show me the evidence, the ultimate aim of which is to ensure the product delivered is absolutely consistent and within specification. Six Sigma has a bias to understanding the customer and only acting on statistically valid evidence.

The aim of both Lean and Six Sigma is to reduce waste, particularly defects, improve process performance and thereby increase customer satisfaction. Lean aims to achieve this by identifying and removing waste and non-value added activities. Six Sigma aims to achieve this by ensuring the customer needs are fully understood and the process is capable of delivering the required product consistently.

- Which should be used first, Lean or Six Sigma?

My perception is that if a practitioner is more comfortable with Lean they will use the lean tools first and if they are more comfortable with Six Sigma they will apply Six Sigma first. Let’s phrase that question differently and see if it still makes sense.

Do you want to reduce waste, defects, and lead time for your process through Lean, or do you want to reduce waste, defects and lead time for your process through Six Sigma? I believe almost every production manager and senior executive would ask one more question; why do I have to choose?

Lean and Six Sigma processes are valuable and there is a strong crossover in the skills. For example, if final checking of a process is unnecessarily complicated and yielding too many defects, would you want to be certain that the test method was correctly identifying defects? Of course. Therefore we should use Six Sigma first, right, because that is where we find gauge repeatability and reproducibility tools? However, would you want to wait until that was done before simplifying the process? If we give in to the tyranny of “or” we have to choose. What if we choose “and” instead, and use different groups in the team for the two exercises? We need to make sure they communicate effectively, but if the tasks are perceived as of equal importance and we promote a collaborative approach, we can get both done in parallel. That way we eliminate the non-value-added steps and ensure that we can separate good parts from bad parts.

If we start with Lean we end up with a simple process (good) without knowing if our output performance is due to the test method, operator or parts (bad). If we start with Six Sigma, we know where the variation in finished part performance comes from (good), but the process is still very complicated and we still can’t clearly see what needs to change (bad). If we apply both techniques in parallel we get a simplified process (good) with clarity of process performance (good). Applying both in parallel gives the best results.

- Is Lean better than Six Sigma?

Is your car engine more important than the steering? Neither works well without the other. Having a car that can go fast but is hard to direct is not going to work; equally, having an excellent capability to direct the car but nothing to make it move is also going to fail.

Lean and Six Sigma are complementary, and whilst each is an excellent tool in its own right, when used together these two tools yield results far in excess of what each can give alone. Neither is better than the other; they are different and they are complementary. Lean or six sigma is not a binary choice; together they are a comprehensive toolkit for solving problems.

Just as an engineer uses different tools and techniques for different structures, so Lean and Six Sigma should be applied when the tools and techniques are appropriate to the task in hand.

So my final message on this would be: don’t worry about whether the improvement process should be lean or six sigma; instead, worry about whether the tool selected to improve the process will yield the most effective solution. In other words, don’t get trapped by the tyranny of “or”; instead, be empowered by the freedom of “and”.